A trip to Vegas, an evil clown, a bizarre coin, and a minister. What could go wrong?

I commented in a footnote of an earlier post that one of my favorite – no, morally obligated – questions to ask when faced with imposing assertions or risky plans is: “How do you know that?” If we could but practice the discipline to ask this question more often, with greater courage and rigor, I think it would lead us to fewer intrusive and ineffective public policy decisions, fewer assertions of power over those we distrust, and fewer costly commitments to action based solely on untested gut feel or intuition.

Please, don’t misunderstand me. I think intuition or gut feel plays a very important role in many areas of life, including business, science, mathematics, policy making, cooking, career choices, and a hundred more. In fact, I’m pretty sure that all progress and innovation occur, in part, because of an initial intuition or hunch that arises in the mind of an interested inquirer. The question, though, is what do we do with such a hunch when we face critical or risky decisions? Do we Farragut ahead without regard to the possible risks, damning the torpedoes, or do we attempt to answer the question, “How do I know that?” and consider the implications of our intuition before we act?

Fortunately, we have a tool, Bayes’ Theorem (named after the Reverend Thomas Bayes, who left this intellectual gem behind at his death in 1761), to integrate our intuition and systematic inquiry in a logically powerful way. For the sake of my discussion here, I’m not going to demonstrate a derivation of his beautiful theorem. You can read about that on your own.
For now, I state the theorem in a simple way that will let us explore its use to aid in satisfying our question, “How do I know that?” The theorem looks like this:

$$P(\text{state}_i \mid \text{data}) = \frac{P(\text{data} \mid \text{state}_i)\,P(\text{state}_i)}{\sum_j P(\text{data} \mid \text{state}_j)\,P(\text{state}_j)}$$

Or, in more natural language: our improved understanding of the states of a system under investigation after we do some experiments is our initial belief in each state multiplied by the conditional probability of the observed data in that state, normalized by the weighted probability that we could observe the data across all states. For the sake of our discussion, I write it more succinctly and informally as:

Improved understanding about specific states of nature = (Initial hunches about specific states of nature) × (Likelihood that we would observe the collected evidence if each state of nature were the case) ÷ (Weighted probability that our collected evidence could have been produced by the conditions of our initial hunches)

Or, shorter still:

Improved Understanding = (Hunch × Likelihood) ÷ (Weighted Probability of Observed Evidence)

As long as we can describe our initial hunch in terms of clearly identified, exhaustive, non-overlapping states or outcomes, with degrees of belief that each might be the case, and those degrees of belief follow the Rules of Probability, then we’re in a good place to begin learning. Then, as evidence comes in, it will corroborate which parts of our Hunch are most likely to be the case and which parts can be discounted.

Suppose you were offered the opportunity to gamble with Bizarro the Magic Clown in a Las Vegas nightclub act with a series of coin tosses. Although you’d like to trust Bizarro (because he was your favorite Saturday TV show host before his career washed up), and you think it’s probably difficult to actually bias a coin away from even odds given its symmetry, you consider that there might be some chance that the coin is biased in his favor. After all, this is show biz with a strange clown.

So, you consider the states that describe the inclination of the coin to favor heads (the bias). If the coin behaves the way we naturally expect a coin to, you say the bias is 0.5. If you think Bizarro could be sneaky, such that he weighted the coin toward heads but kept it on the verge of believably fair, you might say the bias is 0.7. Likewise, if you think he weighted the coin toward tails, you might say the bias is 0.3. Now you have taken into account the range of possible states of behavior the coin could take on. But how likely is it that Bizarro has successfully weighted the coin in his favor? This is where your hunch comes in.

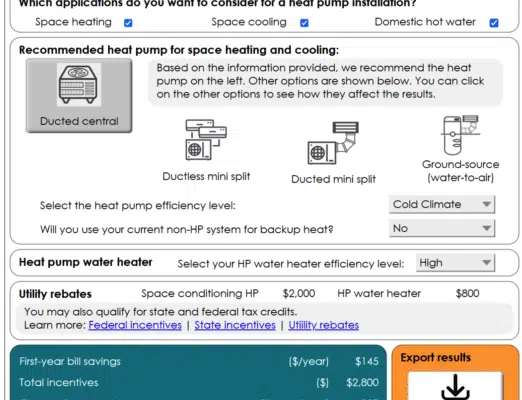

The now taciturn Reverend Thomas Bayes, giving people the cognitive tools to combat evil clowns since 1764. (Image obtained from Wikipedia.)

Without any more information about the coin than merely the range of possible biases, you would logically say that the probability of each bias state is 1/3. You do have a little more information than just the range of states, though. Since you think it’s difficult to bias a symmetric coin, you increase your belief in the evenly weighted state to 0.4 and decrease your belief in each of the weighted states to 0.3. You’re not quite saying that you have insufficient reason to state anything other than equal degrees of belief for the biases, but you’re in the neighborhood. Note that your degrees of belief must sum to 1, but the values associated with the biases don’t have to sum to 1*. The bias values merely describe the possible states, not the likelihood of their being the case. Now you combine the information you have and your degrees of belief into a Hunch model:

Hunch(B1=0.3, B2=0.5, B3=0.7) = [30%, 40%, 30%]
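
The Hunch model can be written down as a small lookup table. The sketch below is one way to do that in Python, with a check that the degrees of belief obey the rules of probability. The names `hunch` and `is_valid_hunch` are illustrative assumptions, not part of the original discussion.

```python
import math

# Hunch model: each candidate bias (probability of heads) maps to
# the degree of belief that it is the true state of the coin.
hunch = {0.3: 0.30, 0.5: 0.40, 0.7: 0.30}


def is_valid_hunch(beliefs):
    """Return True if the beliefs are non-negative and sum to 1."""
    values = list(beliefs.values())
    return all(v >= 0 for v in values) and math.isclose(sum(values), 1.0)


# The degrees of belief must sum to 1 ...
print("valid hunch:", is_valid_hunch(hunch))
# ... but the bias values themselves are only labels for the states.
print("sum of bias values:", sum(hunch.keys()))
```

The second line of output makes the point from the text: the bias values are descriptions of the states, so nothing requires them to sum to 1.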

Reminding Bizarro of the fond memories you hold for him from youth, you ask him for the favor of having the coin tossed, say, ten times, allowing you to record the outcomes. You observe seven heads and three tails. While the outcomes match one of your assumptions about the bias of the coin (B3=0.7), leaning in the direction of heads, you realize that the small number of samples you took might not be enough to warrant an immediate conclusion without further investigation, so you continue your stroll with the good Reverend.

Now, you have good reason to believe that the coin tosses were independent because your dear ol’ Mom performed them (reluctantly, because she doesn’t approve of your gambling, by the way) with the care only a mother can provide. Given this independence assumption, you press on to calculate the Likelihood. The Likelihood represents the probability that you could observe the acquired Evidence if each possible bias state really were the case. So, if the coin really was weighted to tails (B1=0.3), the Likelihood that you would have observed seven heads and three tails through independent tosses is

$$\text{Likelihood}(\text{B1}=0.3) = \text{prob(heads)}^{7} \times \text{prob(tails)}^{3} = (0.3)^{7} \times (1-0.3)^{3} = 7.5 \times 10^{-5}$$

Repeating this calculation for each of the remaining bias states, you get

$$\text{Likelihood}(\text{B1}=0.3,\ \text{B2}=0.5,\ \text{B3}=0.7) = [7.5 \times 10^{-5},\ 9.8 \times 10^{-4},\ 2.2 \times 10^{-3}]$$
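
The likelihood calculation is short enough to sketch in Python. The function name `likelihood` and its parameters are illustrative assumptions. The sketch assumes independent tosses and, like the text, leaves out the binomial coefficient (the number of orderings of seven heads and three tails), which is the same for every bias and so cancels once the results are normalized.

```python
def likelihood(bias, heads, tails):
    """Probability of one specific sequence with the given counts,
    assuming independent tosses and P(heads) = bias."""
    return bias**heads * (1 - bias)**tails


biases = [0.3, 0.5, 0.7]
likelihoods = [likelihood(b, heads=7, tails=3) for b in biases]

for b, lk in zip(biases, likelihoods):
    print(f"Likelihood(B={b}) = {lk:.1e}")
```

Rounded to two significant figures, the three values agree with the ones quoted above.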

All that remains is to calculate the Weighted Probability of Observed Evidence and finally the Improved Understanding. In this particular case, you find the Weighted Probability simply by summing the pairwise products of the elements of the Hunch model and the Likelihood:

$$\text{Weighted Probability of Observed Evidence} = (30\% \times 7.5 \times 10^{-5}) + (40\% \times 9.8 \times 10^{-4}) + (30\% \times 2.2 \times 10^{-3}) = 1.1 \times 10^{-3}$$

Now, given your initial Hunch, the Likelihood that the evidence you collected could have occurred were any of the bias states in play, and the Weighted Probability of Observed Evidence, you calculate the Improved Understanding for the first bias (B1=0.3) as

$$\text{Improved Understanding}(\text{B1}=0.3) = (30\% \times 7.5 \times 10^{-5}) \div (1.1 \times 10^{-3}) = 2.1\%$$

Repeating this calculation for the remaining bias states, you get

$$\text{Improved Understanding}(\text{B1}=0.3,\ \text{B2}=0.5,\ \text{B3}=0.7) = [2.1\%,\ 36\%,\ 62\%]$$
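
Putting the pieces together, the whole update can be sketched as one small function. The name `bayes_update` and the dictionary layout are assumptions made for illustration. The sketch assumes the states are exhaustive and non-overlapping, and that the tosses are independent.

```python
def bayes_update(hunch, heads, tails):
    """Return updated beliefs for each bias after observing the tosses.

    hunch maps each candidate bias (probability of heads) to a prior
    degree of belief. Tosses are assumed to be independent.
    """
    # Hunch x Likelihood for every state.
    weighted = {
        bias: belief * bias**heads * (1 - bias)**tails
        for bias, belief in hunch.items()
    }
    # Weighted Probability of Observed Evidence: the sum over all states.
    evidence = sum(weighted.values())
    # Improved Understanding: normalize so the beliefs sum to 1 again.
    return {bias: w / evidence for bias, w in weighted.items()}


hunch = {0.3: 0.30, 0.5: 0.40, 0.7: 0.30}
improved = bayes_update(hunch, heads=7, tails=3)

for bias, belief in improved.items():
    print(f"B={bias}: {belief:.1%}")
```

Because the function returns beliefs in the same shape as the Hunch, its output can be fed back in as the new Hunch when more tosses arrive. Updating in several smaller batches gives the same result as updating on all ten tosses at once.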

The structure looks like your initial Hunch model, but notice now how your Hunch has shifted. You have all but eliminated a bias towards tails. Although your tendency to think the coin is fair drops just a little bit, your belief in the coin’s bias toward heads has doubled. From these results, then, you infer that Bizarro is likely a Siren luring you to the rocks of ruin.

How do you know that? You know it because the range of possibilities and your associated degrees of belief about them in your initial Hunch updated to nominally exclude one possibility (i.e., the coin behaves with a bias of 0.3) and to redistribute your belief among those that remain, mostly toward a bias for heads. You still don’t know the bias with certainty, but you know better than you did before. More experiments will likely strip away more unnecessary possibilities to leave those that best explain reality.

My goal here is to demonstrate how the structure of Bayes’ Theorem can help, both informally and formally, to support our quest to answer the question, “How do I know that?” When we combine our initial intuition with evidence that reduces the range of possible outcomes from that intuitive model, we are propelled to accept the remaining outcomes as a more legitimate explanation with a higher degree of confidence.

Herein lies a tale of caution, too, for those times when we bet the farm on singular beliefs. Statements of belief held with certainty can be overwhelmed very quickly, embarrassingly or disastrously so, by a single example of disconfirming evidence, as erstwhile prophet Harold Camping witnessed not once, but twice, in 2011. By backing away toward a more forgiving skepticism (i.e., permitting a range of outcomes with associated degrees of belief), Bayes’ Theorem provides us not only a means to make a humble assessment of what we actually know and intuit, but also a means to learn and progress to our benefit within that humility.

*Notice, also, that your Hunch is an informed one, not an arbitrary, willy-nilly one. You based it on the actual possibilities of the system under consideration. It’s not as if you started with Hunch(B1=0.3, B2=oranges, B3=0.7) = [30%, 40%, turtles].